What it is and why it matters

ETL gained popularity in the 1970s when organizations began using multiple data repositories, or databases, to store different types of business information. The need to integrate data that was spread across these databases grew quickly. Over time, the number of data formats, sources and systems has expanded tremendously. ETL has become the standard method for taking data from disparate sources and transforming it before loading it to a target source, or destination.

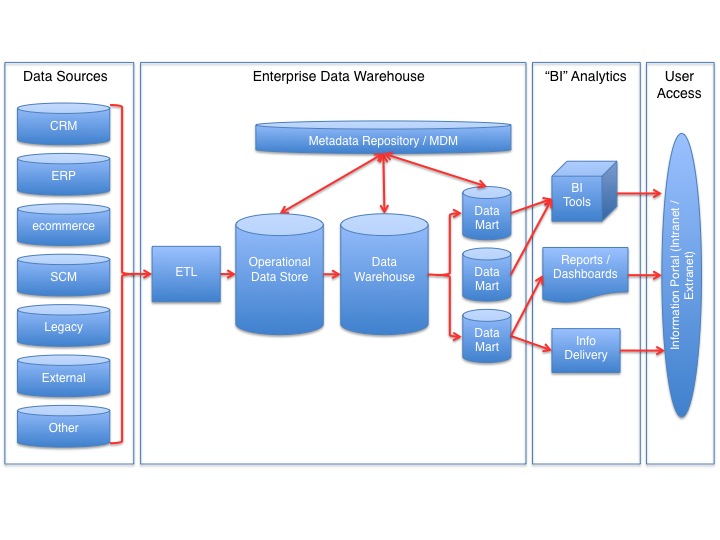

ETL stands for extract, transform and load. ETL is a type of data integration process referring to three distinct, but interrelated steps used to synthesize raw data from its source to a data warehouse, data lake or relational database. From various data sources (that are not optimized for analytics), ETL loads data first into the staging server and then into the target system, whereas extract, load and transform (ELT) loads data directly into the target system. ELT is an alternate but related approach designed to push processing down to the database for improved performance.

The three distinct steps of ETL

Extract

During extraction, ETL identifies the data and copies it from its sources, so it can transport the data to the target datastore. The data can come from structured and unstructured sources, including documents, emails, business applications, databases, equipment, sensors, third parties, and more.

Transform

Because the extracted data is raw in its original form, it needs to be mapped and transformed to prepare it for the eventual datastore. In the transformation process, ETL validates, authenticates, deduplicates, and/or aggregates the data in ways that make the resulting data reliable and queryable.

Load

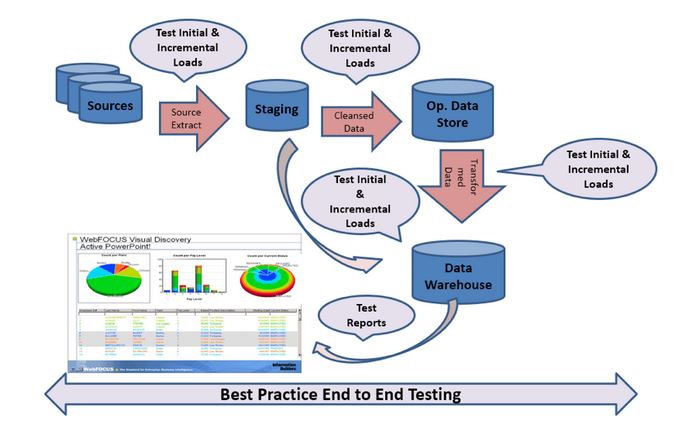

ETL moves the transformed data into the target datastore. This step can entail the initial loading of all the source data, or it can be the loading of incremental changes in the source data. You can load the data in real time or in scheduled batches.Benefits of ETL

- Transforms data from multiple sources and loads it into various targets

- Provides deep historical context for businesses

- Allows organizations to analyze and report on data more efficiently and easily

- Increases productivity as it quickly moves data without requiring the technical skills of having to code it first

- Evolves and adapts to changing technology and integration guidelines